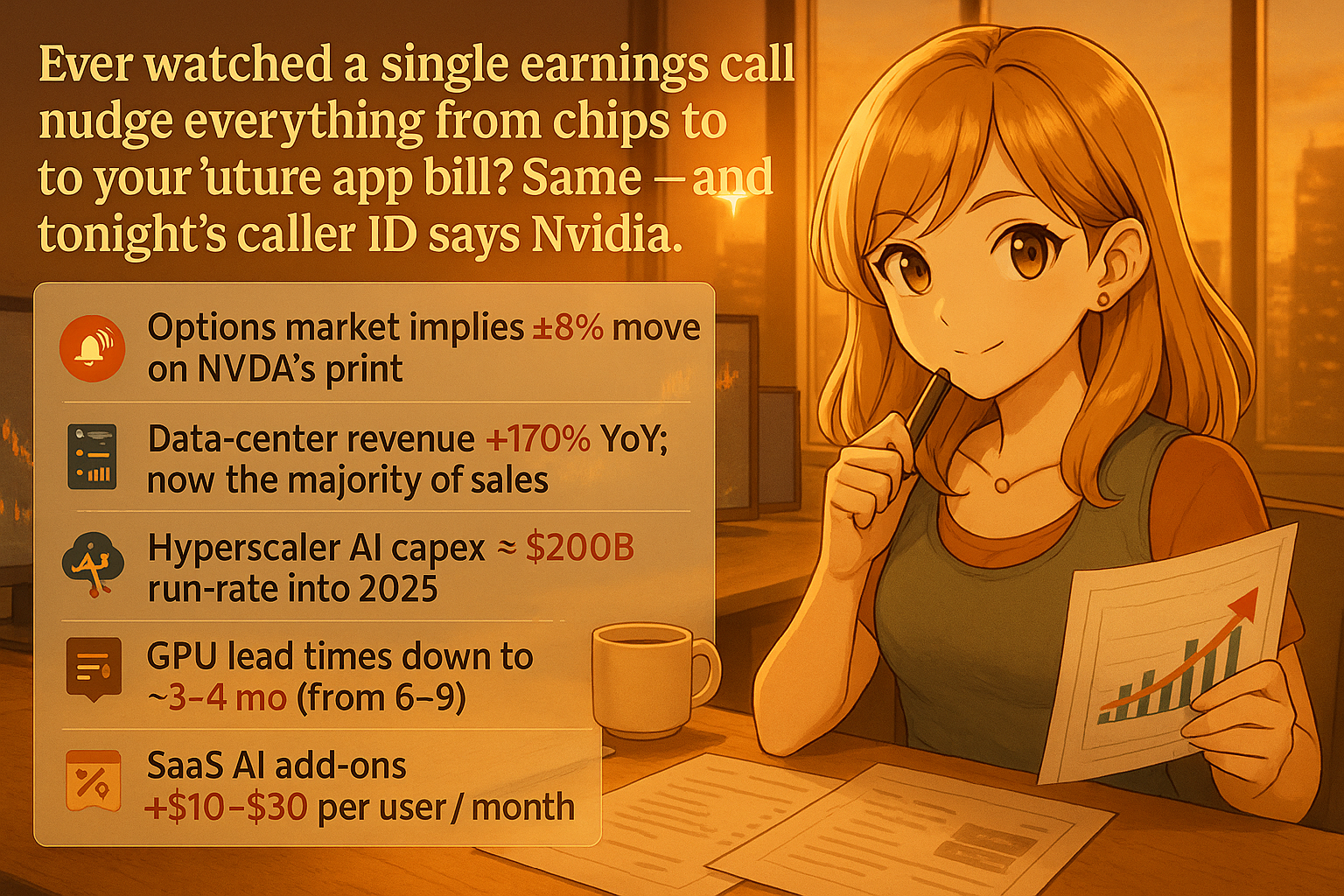

Ever watched a single earnings call nudge everything from chips to crypto to your future app bill? Same — and tonight’s caller ID says Nvidia.

what’s happening

the market’s braced for about $65.7 billion in revenue, and traders are treating the guidance like a weather report for the whole AI economy. institutional investors and retail traders alike are refreshing their screens, waiting for any hint about where AI spending is headed next. even crypto’s pre-gaming it — Solana popped around 8% heading into the print — because a beat/raise (aka beating expectations AND raising future guidance) has historically flipped the risk switch across AI hardware and tokens. when Nvidia delivers good news, it tends to lift sentiment across the entire tech ecosystem, from semiconductor stocks to blockchain projects building AI-powered applications.

why this one call matters

last quarter was a record $57 billion, with a wild $51.2 billion from data centers alone. that’s roughly 90% of total revenue coming from one segment, which tells you everything about where the AI gold rush is actually happening. some cloud GPUs were effectively sold out for months in advance, which is why investors want receipts on backlog and order visibility (basically: how many orders are locked in and how far out can we see demand). the concentration in data center revenue also means any slowdown in enterprise AI spending would hit Nvidia disproportionately hard — it’s both their superpower and their vulnerability.
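if you want to check the math yourself, here's a minimal sketch using only the figures quoted in this post. the variable and function names are made up for illustration, and the growth number is just what the expected revenue implies against last quarter's total, not anything Nvidia has guided to.

```python
# figures quoted in this post, in billions of USD
last_quarter_total = 57.0        # record total revenue last quarter
last_quarter_data_center = 51.2  # data center segment, last quarter
expected_total = 65.7            # roughly what the market is braced for


def share(part, whole):
    """Return part as a percentage of whole."""
    return part / whole * 100


def growth(new, old):
    """Return the percentage change from old to new."""
    return (new - old) / old * 100


data_center_share = share(last_quarter_data_center, last_quarter_total)
implied_growth = growth(expected_total, last_quarter_total)

print(f"data center share of revenue: {data_center_share:.1f}%")       # 89.8%
print(f"implied quarter-over-quarter growth: {implied_growth:.1f}%")  # 15.3%
```

so "roughly 90%" checks out, and hitting the expected number would mean about 15% growth in a single quarter.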

quick decoder ring for the jargon:

– GPUs — Graphics Processing Units — the chips doing all the AI math, from training massive language models to running real-time image generation

– Hyperscalers — mega cloud providers like AWS, Azure, Google Cloud — basically the landlords of compute who buy Nvidia chips by the thousands

– Inference — running an AI model after it’s trained — aka the part that actually hits your app’s bill every time you use a feature like autocomplete, image editing, or chatbot responses

little-known wrinkle

China just approved imports of Nvidia’s higher-end H200 chips for major tech firms, which could reshuffle regional demand in ways nobody’s fully priced in yet. this matters because China is a massive potential market that’s been largely off-limits under export controls, so any loosening could reopen a revenue stream. separately, Nvidia says its next-gen “Vera Rubin” architecture is moving into production, with cost cuts expected to show up in 2026 if ramps go to plan. the handoff between chip generations is always tricky, though: customers sometimes pause orders while they wait for newer hardware, which creates temporary demand gaps.

what’s next, practically?

if costs fall and supply loosens, AI features in your favorite apps might stop creeping up in price. developers could afford to add more AI-powered tools without passing costs directly to users. if supply stays tight, those cloud bills trickle down to you eventually through subscription increases or usage limits. my read: listen for the verbs — “ramping,” “constrained,” or “balanced.” that’s where the real story hides. also pay attention to any commentary about customer concentration — if a handful of hyperscalers represent most of the demand, that’s a different risk profile than broad enterprise adoption.

i’ll drop a TL;DR once the numbers hit so you don’t have to stay up decoding it yourself 💅
